When IT Disasters Strike: Keep Calm and Communicate – Just Ask GitLab

Airlines, take notes from GitLab. This is how you do a disaster.

We’ve been saying it for years: disasters are gonna happen. Not a week goes by without news of an airline outage, ransomware taking down a hospital, or a power failure knocking a major website offline.

This week saw at least two: Delta’s outage, and a GitLab employee accidentally deleting a database. Both had a rough time in the news, especially Delta, whose outage was highlighted by POTUS himself on his Twitter account. Fat chance that any company, big or small, can get through an outage without anyone noticing.

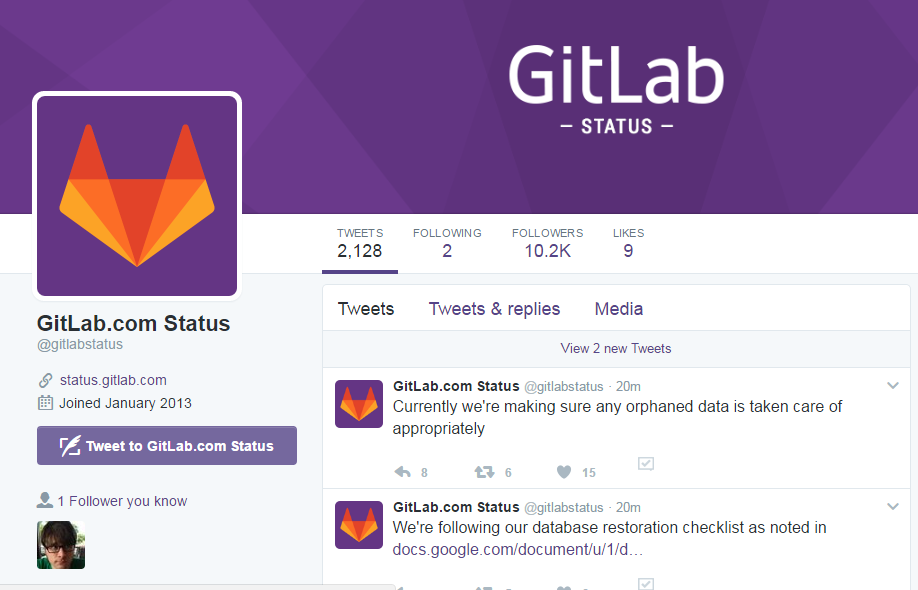

What happened after the outages, however, was particularly interesting this week. GitLab (according to Wikipedia, “a web-based Git repository manager with wiki and issue tracking features”) schooled the world on how to handle an outage. How did they do it? And why am I writing this even while the site is still down? Because GitLab was proactive and honest, and it provided minute-by-minute updates:

- An immediate mea culpa and an exact account of what happened, even though it was embarrassing.

- An open Google Doc explaining exactly which stage of the recovery they were in, including details of the outage and the resources involved.

- A live “GitLab Status” Twitter feed reporting every step of the recovery and its current status.

We don’t know what the GitLab datacenter looks like, but assuming it meets the basic criteria of a Zerto customer (virtualized on VMware or Hyper-V), we have a lot to offer here. File-level recovery and our point-in-time checkpoints could have helped recover the GitLab database much sooner. Our non-disruptive DR testing would have allowed a full “dry run” of the GitLab datacenter to confirm DR preparedness. We wish we could have been there to help. Regardless, enterprises should take notes from this relatively small start-up on what customers expect from a company during an outage. GitLab set the gold standard today.